For the past eighteen months, almost every maritime organisation I have worked with has wanted to adopt AI and been quietly stuck.

The reasons look different on the surface, with different sectors, different regulators, different IT teams. Underneath, the gridlock has been the same. The organisation lives inside Microsoft 365. The IT policies that protect it were written before generative AI existed. Every promising AI tool has sat outside the perimeter, requiring a new sub-processor approval, a new data flow review, a new vendor risk assessment. None of that work could happen at the speed the technology was moving.

The other side of that gridlock is rarely discussed but matters as much. The independent AI advisory and build community has been just as stuck. In the practitioner conversations I have been part of over the past year, the same position has come up again and again: experienced builders have avoided enterprise clients locked into Microsoft, because the tools available inside that ecosystem could not do meaningful agentic work. Each side has been waiting on the other.

That is what has now changed.

I am writing this on a Saturday morning, three days after taking part in a global Microsoft event building agents inside the Microsoft ecosystem. I have walked out of a lot of AI events over the past two years. This one sits differently. The agents I was building did things I did not believe were possible inside a Microsoft tenant a year ago, and they did them under exactly the kind of governance perimeter our industry needs. I am now halfway through the agent build that came out of it, which I will share here when it is ready for feedback.

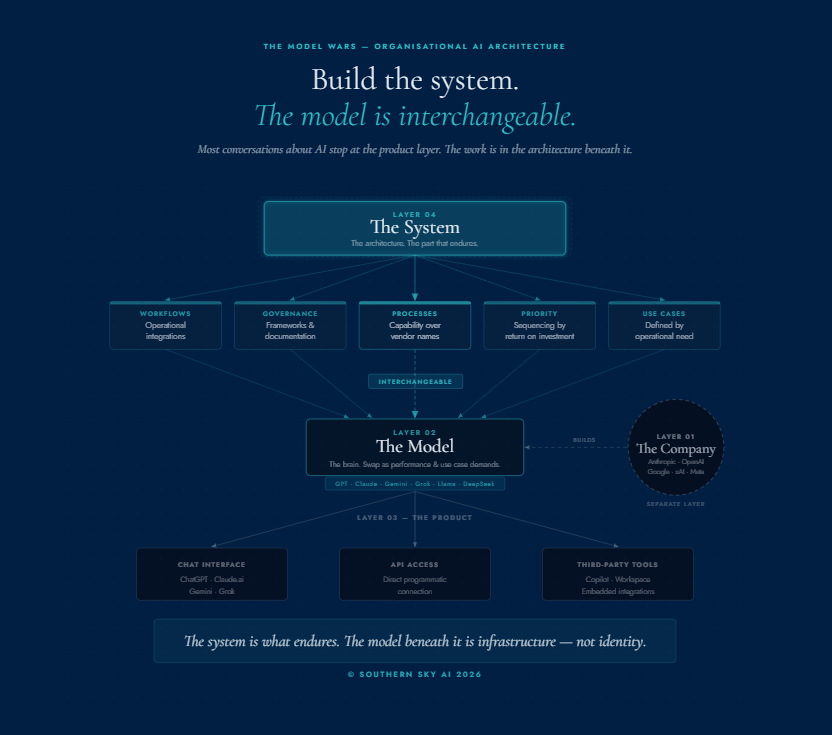

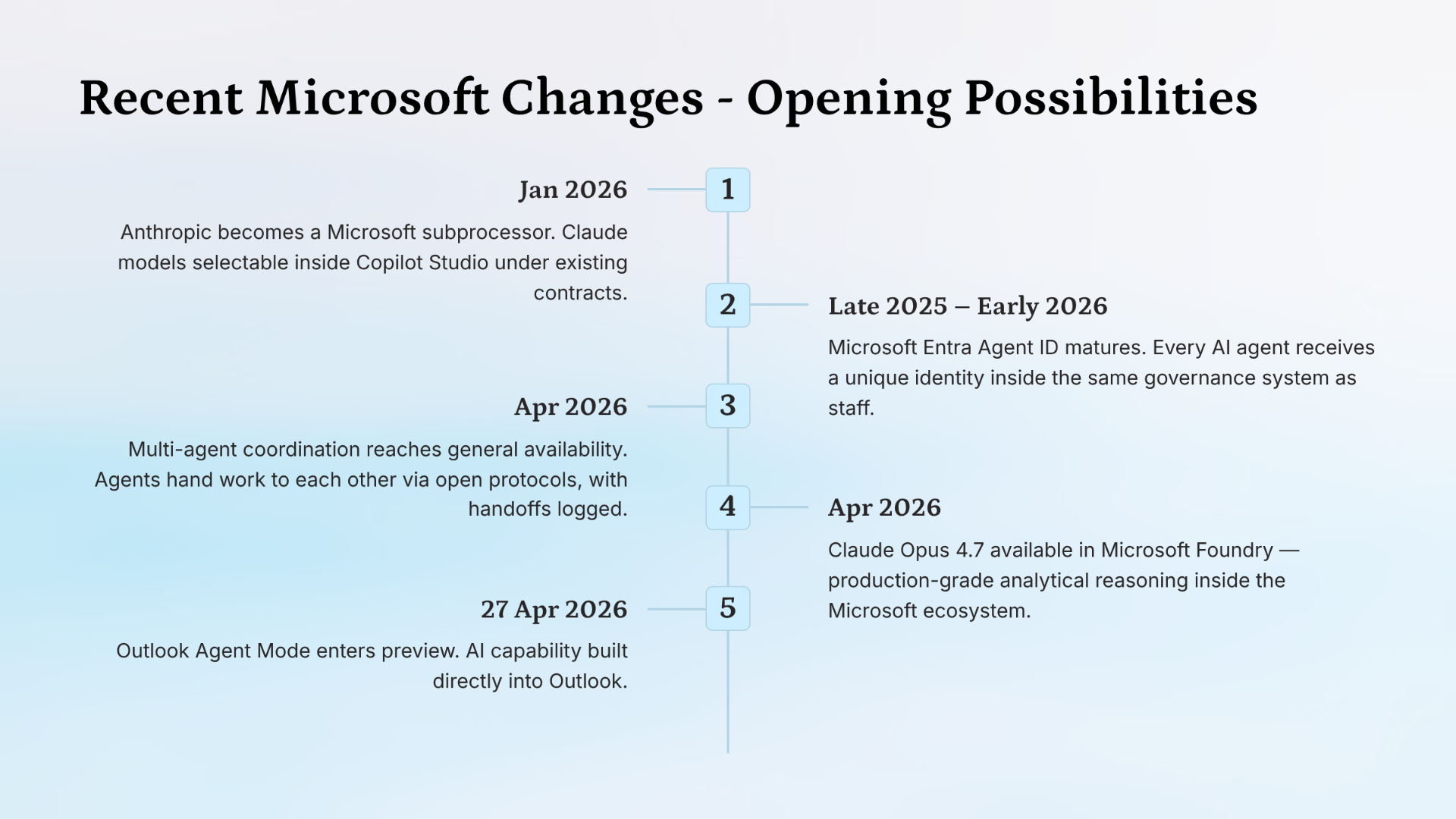

Five things have shifted between September 2025 and last week. None of them are dramatic in isolation. Taken together, they have moved the procurement gate that has been holding our industry back, and most of the leaders I have spoken with this year have not yet seen the picture come together.

I want to walk through them in plain language, because the word "Copilot" carries a lot of history, and the version most of us have evaluated is not the version doing the work that matters now. That distinction is recent. It is also the reason this article exists.

For maritime companies that have been Microsoft-locked and AI-blocked, the gate has moved.

Recent Microsoft changes timeline showing the shifts between October 2025 and May 2026 that reopened the AI conversation for maritime organisations.

What "Copilot" means now

The naming inside the Microsoft suite has not helped anyone. Three different things sit underneath the word "Copilot," and they do quite different work. Twelve months ago, the only one most of us had a reason to evaluate was the first one. The other two have changed substantially since then.

The first is the consumer layer

Microsoft 365 Copilot, the assistant that lives in the sidebar of Word, Outlook, Excel, and Teams. It drafts emails, summarises documents, answers questions about a meeting, surfaces information from across the tenancy. It is useful in the way a good intern is useful. It is also the version most maritime leaders mean when they tell me they have already looked at Copilot. I understand why. It has been the visible face of the product for years, and for most of that time it was thin enough that a serious evaluation could be completed in a week.

The second layer is Microsoft Copilot Studio

A separate product, accessed through a separate web address, with a separate purpose. This is where agents are built: autonomous and semi-autonomous workflows that take actions, query systems, draft outputs, route results, and integrate with the platforms an organisation already pays for. Copilot Studio lets the maker choose which AI model to use for each task, including Anthropic's Claude. Critically, agents built here run inside the same Microsoft tenancy as the data they work with. No new sub-processor. No new data flow leaving the perimeter. This is the layer doing the work that has changed the conversation, and it is the layer most leaders have never opened.

The third layer is Microsoft Foundry

Formerly Azure AI Foundry, rebranded at Microsoft Ignite in November. It is the developer environment for building production-grade multi-agent systems with deeper control over latency, compute, orchestration, and direct model access. Most maritime organisations will not operate at this layer directly, but it is the platform underneath the agents the organisation will eventually consume, which is why the layer exists in this article at all.

The agent-building layer was not a viable option twelve months ago. It is now.

If you looked at the consumer layer twelve months ago and concluded Copilot was not the answer for your organisation, you were reading the layer accurately at the time. The agent-building layer has changed substantially since then, and the rest of this article is an invitation to take a fresh look at the part that has moved.

› Read more on Microsoft: Microsoft 365 Copilot overview, Microsoft Copilot Studio overview, Microsoft Foundry overview.

Anthropic became a Microsoft sub-processor

Late last year, on 8 December 2025, something happened inside the Microsoft 365 admin centre that did not make the news cycle outside the Microsoft community but mattered more than any model release did. Microsoft replaced the old "accept Anthropic's separate commercial terms" toggle with a single new control: AI providers operating as Microsoft subprocessors. From that point, Anthropic operates inside Microsoft tools under the existing Microsoft Product Terms, the existing Microsoft Data Protection Addendum, the existing Enterprise Data Protection commitments, and the existing Microsoft Customer Copyright Commitment. On 6 January 2026, the toggle defaulted to on for most commercial cloud tenants. EU, UK, and EFTA tenants remain off by default, and a separate, more granular control was added for them in early April 2026.

I want to make sure the practical meaning of this is not lost in the procedural language. Before December, accessing Claude inside Microsoft tools required a customer to sign a separate Anthropic agreement, accept a separate data protection addendum, and approve a new sub-processor. In a regulated maritime organisation, every one of those is a procurement-blocking event. After December, none of that is required. Anthropic appears in the existing Microsoft Service Trust Portal sub-processor list. The decision shifts from a complex cross-vendor negotiation to a routine governance review against contractual coverage the organisation already has in place.

There is a caveat for European customers. Anthropic-routed traffic is excluded from Microsoft's EU Data Boundary and from the in-country processing commitments that apply to OpenAI traffic. That is a real consideration for European registries, fleet managers, and operators with strict residency obligations, and it deserves to sit at the top of the governance review rather than the bottom. Government and sovereign clouds are a separate matter again. Anthropic is not available there, and there is no toggle to change.

For most commercial maritime organisations outside Europe, though, this is the unblocking event. A Cayman-flagged fleet manager, an Australian marine operator, a US-based shoreside management group: each operates inside the same Microsoft tenancy architecture, and for each of them the most important AI policy decision in 2026 is whether to enable Anthropic models inside their tenant, and on what terms. That decision is a governance review, not a procurement project.

› Read more on Microsoft: Anthropic as a subprocessor for Microsoft Online Services, Microsoft Service Trust Portal, Microsoft Customer Copyright Commitment.

Claude arrived inside both layers of Microsoft

There are two events here, separated by about five weeks, that together explain why the model conversation has changed.

On 9 March 2026, Microsoft launched Copilot Cowork, an autonomous agent that takes cross-application actions across Word, Excel, Outlook, and Teams, grounded in the organisation's tenant data. Cowork ships with Anthropic's Claude as a model option, alongside OpenAI. This is the part most M365 users I speak to have not registered. They saw the November partnership headlines, filed it as abstract news, and missed the actual ship date. If you use Microsoft 365 Copilot, you can already select Claude as the model behind your Cowork experience. The data stays inside the tenancy. The model running on it is your choice.

On 16 April 2026, Anthropic released Claude Opus 4.7. The same day, Microsoft made it available across Microsoft 365 Copilot Cowork, Copilot Studio early-release cycle environments, Copilot in Excel, and Microsoft Foundry. Claude Sonnet 4.5 and 4.6 and Claude Opus 4.5 and 4.6 are also selectable. OpenAI fallback is automatic if Anthropic is disabled.

The practical shift is that twelve months ago, the choice inside Microsoft was OpenAI or nothing. Today, the same Microsoft tenant that already carries the organisation's documents, email, and Teams traffic can run Claude Opus 4.7 against that data. At the consumer layer, Cowork brings Claude into the surfaces leaders already use every day. At the agent layer, Copilot Studio lets builders configure the model per task: reasoning-heavy analysis on Opus 4.7, faster operations on Sonnet 4.6, lightweight queries on smaller models. Model choice has become a configuration decision rather than a vendor commitment.

For a maritime organisation building an agent that classifies port-state-control correspondence, drafts technical responses, or processes flag-state guidance, model choice matters. A reasoning-heavy use case grounded in regulatory text performs differently on Claude Opus than on a smaller model. The ability to make that choice inside the existing Microsoft contract is the difference between a six-month procurement cycle and a fortnight of build work.
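
The per-task model choice described above can be pictured as a small lookup table. This is a minimal sketch, not Copilot Studio's actual configuration format: the task categories, the model labels, and the `choose_model` helper are all hypothetical names invented for illustration. The fallback branch mirrors the behaviour noted earlier, where OpenAI is used automatically if Anthropic is disabled.

```python
# Hypothetical task categories mapped to illustrative model labels.
# These strings are NOT real Copilot Studio identifiers.
MODEL_BY_TASK = {
    "regulatory_analysis": "claude-opus-4.7",   # reasoning-heavy work
    "routine_operations": "claude-sonnet-4.6",  # faster operations
    "lightweight_query": "small-model",         # placeholder for a smaller model
}
FALLBACK_MODEL = "openai-default"  # placeholder label for the OpenAI fallback


def choose_model(task_type: str, anthropic_enabled: bool = True) -> str:
    """Return the model label for a task type.

    Unknown task types, and Claude selections made while Anthropic is
    disabled for the tenant, fall back to the OpenAI placeholder.
    """
    model = MODEL_BY_TASK.get(task_type, FALLBACK_MODEL)
    if model.startswith("claude") and not anthropic_enabled:
        return FALLBACK_MODEL
    return model


print(choose_model("regulatory_analysis"))
print(choose_model("regulatory_analysis", anthropic_enabled=False))
```

The point of the sketch is that changing a model is an edit to one table entry, which is what "a configuration decision rather than a vendor commitment" looks like in practice.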

› Read more on Microsoft: Microsoft Copilot Cowork, Anthropic models in Microsoft 365 Copilot and Copilot Studio, Available today: Anthropic Claude Opus 4.7.

The agent layer connects to over 1,400 systems

This is the one I find most useful when I am sitting across from a maritime executive trying to explain why this matters. The maritime technology stack is famously fragmented. Fleet management systems. Crew management systems. Compliance and ISM platforms. Charter management. Port agency. Surveying. Accounting. Document control. Each one is a separate platform with its own login, its own data, its own contract, its own integration history. The most common operational question I hear, in some variant, is whether the AI can see across all of these at once.

The architectural answer to that question now exists, and it is called the Model Context Protocol, usually shortened to MCP. It is an open standard for connecting AI models to tools and data sources. Microsoft brought MCP to general availability inside Copilot Studio at Ignite in November. By March, MCP Apps shipped with rich UI rendering. By April, custom MCP servers entered the Copilot Studio roadmap as a Wave 1 release. Combined with Microsoft's Power Platform connector library, the official figure for system coverage is now over 1,400 connected systems, including Salesforce, ServiceNow, Confluence, GitHub, Box, Databricks, Miro, and the Microsoft Graph itself.

What this means in practice is that an agent built in Copilot Studio is no longer limited to what lives inside SharePoint or Outlook. An agent can query a vendor management system, retrieve data from a fleet platform, surface the relevant clause from a charter agreement, and route the result into Outlook for human review. All through governed connections that inherit Power Platform data loss prevention policies, virtual network rules, and authentication controls. The agent does not pull data out of the tenancy. The data stays where it lives, surfaced through approved pathways.

Microsoft has spent the last year quietly reframing Copilot Studio from "an AI tool you adopt" into "an operational layer across the platforms you already pay for."

The maritime stack does not need to be rebuilt for this to work. It needs to be connected. There is a meaningful difference between those two propositions.
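
For readers who want to see what "connected" means at the wire level: MCP messages are JSON-RPC 2.0, and an agent invokes a tool on a connected system with a `tools/call` request. The sketch below only builds such a request. The tool name `get_vessel_certificates`, its `imo_number` argument, and the placeholder value are hypothetical, standing in for whatever a real fleet-platform MCP server would expose.

```python
import json


def build_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialise an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical tool and placeholder argument, for illustration only.
request = build_tool_call(
    request_id=1,
    tool_name="get_vessel_certificates",
    arguments={"imo_number": "1234567"},
)
print(request)
```

In Copilot Studio the maker would not normally write this by hand. The value of the standard is that every connected system answers the same shape of request, so the governance controls can sit on one kind of pathway.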

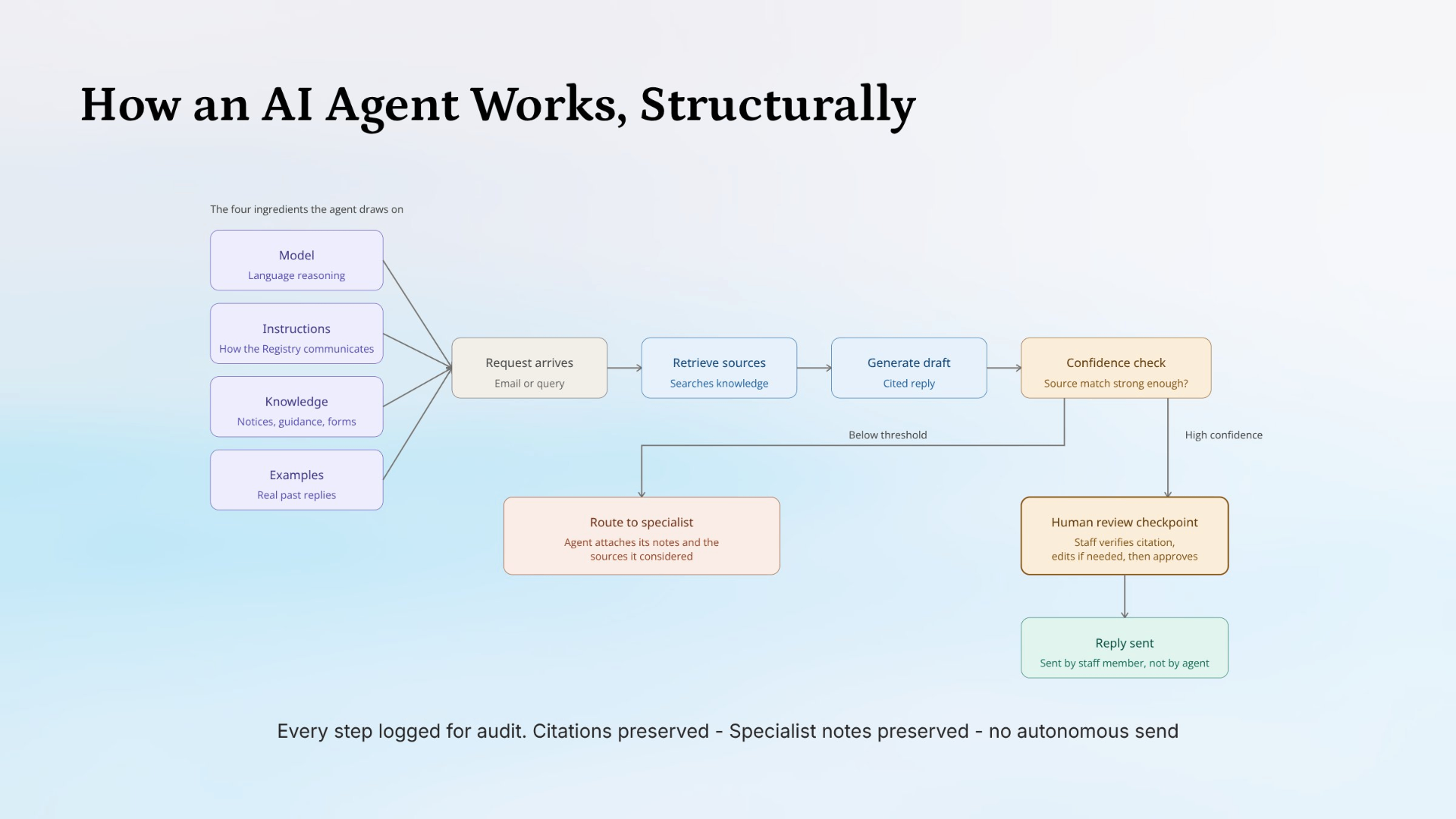

What one of these agents looks like

It is one thing to talk about the agent layer in the abstract. It helps to walk through what one of these agents does, mechanically, end-to-end. The diagram below shows the structure of a working inbox triage agent, the kind I am building inside Copilot Studio for maritime use cases right now.

How an agent is built, mechanically. The four ingredients on the left sit inside Copilot Studio. The flow on the right runs every time an email arrives.

Reading the diagram from left to right: the agent is built from four ingredients. A model handles the language reasoning (Claude Opus 4.7, GPT-5, or whichever model is chosen for the task). Instructions tell the agent how the organisation communicates and what tone to use. Knowledge gives the agent access to the curated documents it draws from, in this case notices, guidance, and forms held in SharePoint. Examples give the agent a set of real past replies to learn the institutional voice from.

The workflow runs every time an email arrives. The agent retrieves the relevant sources from the knowledge base, generates a cited draft reply, then runs a confidence check on its own output. If the source match is strong, the draft moves to a human review checkpoint where staff verify the citation, edit if needed, and approve. If the source match is below threshold, the agent does not guess. It routes the matter to a specialist with its notes and the sources it considered already attached, so the specialist starts the work several steps ahead of where they would have started without it.

The reply is sent by a staff member, not by the agent. Every step is logged for audit. Citations are preserved. Specialist notes are preserved. There is no autonomous send.
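
The routing logic described above can be sketched in a few lines. This is an illustrative outline under stated assumptions, not the agent as built in Copilot Studio: the `Source` and `TriageResult` types, the `triage` function, the 0.75 threshold, and the stand-in draft text are all hypothetical. Note what is absent: there is no send step anywhere in the code.

```python
from dataclasses import dataclass, field
from typing import List

CONFIDENCE_THRESHOLD = 0.75  # assumed value, not taken from a real build


@dataclass
class Source:
    title: str
    match_score: float  # 0.0 to 1.0: how well the source matches the email


@dataclass
class TriageResult:
    route: str  # "human_review" or "specialist"
    draft: str
    sources: List[Source]
    audit_log: List[str] = field(default_factory=list)


def triage(subject: str, sources: List[Source]) -> TriageResult:
    """Retrieve, draft, check confidence, then route. Never sends anything."""
    log = [f"received: {subject}", f"retrieved {len(sources)} source(s)"]
    best = max(sources, key=lambda s: s.match_score, default=None)
    score = best.match_score if best else 0.0
    # Stand-in for the model call that would write the cited draft.
    draft = f"[draft citing: {best.title}]" if best else "[no source found]"
    log.append(f"confidence check: {score:.2f} vs {CONFIDENCE_THRESHOLD:.2f}")
    route = "human_review" if score >= CONFIDENCE_THRESHOLD else "specialist"
    log.append(f"routed to {route}")
    return TriageResult(route, draft, sources, log)


# Hypothetical email subject and source title.
result = triage("PSC deficiency query", [Source("Marine Notice 12", 0.91)])
print(result.route, result.audit_log)
```

Both routes end with a person. The only difference is whether that person is reviewing a well-sourced draft or starting specialist work with the agent's notes and sources attached.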

The agent does the volume work. The staff member does the judgement work. The audit trail captures both.

That last point matters more than any other element of the design. For maritime organisations operating with serious regulatory obligations, an agent that sends correspondence on its own behalf is a different governance proposition from one that drafts work for a human to review and own. Copilot Studio supports both patterns. The pattern in the diagram is the one I keep recommending for regulated environments, because it puts AI inside the workflow without removing the human signature from the output.

This is one workflow. The same architectural pattern (four ingredients on the left, governed workflow with a human checkpoint on the right) extends to other functions inside the same organisation: contract review, document drafting, regulatory mapping, technical query response. One institutional pattern for AI, applied consistently, across functions.

› Read more on Microsoft: Model Context Protocol in Copilot Studio, Power Platform connector reference, Build agents with Copilot Studio.

Microsoft Agent 365 went live last week

On 1 May 2026, eight days before this article, Microsoft Agent 365 went generally available. It is the unified control plane for every agent running inside a Microsoft tenant: agents built in Copilot Studio, agents built in Foundry, agents shipped by Microsoft, and agents from third parties. Agent 365 provides an agent registry, a Microsoft Entra Agent ID per agent, network-level access controls, data loss prevention integration with Microsoft Defender, prompt-injection blocks, a Shadow AI page for unauthorised agent detection, and audit log integration with Microsoft Purview.

For organisations that do not operate inside high-compliance environments, this might feel like infrastructure plumbing. For maritime organisations, it is the missing piece that makes the rest of the story deployable. Until Agent 365, an organisation building or buying multiple agents had no single place to govern them. Each agent lived in its own surface, with its own access logic and audit trail. Agent 365 consolidates that into a single governance plane.

ISM Section 6 already requires documented oversight of personnel and systems. AI use sits inside that obligation whether the organisation has formalised it or not. Agent 365 is the technical layer that makes ISM-aligned AI governance documentable in a way an external auditor will recognise.

That is not a marketing claim. It is the practical reason scaled AI adoption inside maritime organisations becomes possible during 2026 in a way it was not possible in 2025.

› Read more on Microsoft: Microsoft Agent 365, Microsoft Entra Agent ID, Microsoft Purview for AI.

A note on what this costs

This question comes up in every one of these conversations and it deserves a direct answer.

Inside Copilot Studio, agent operations are billed in Copilot Credits, Microsoft's renamed unit of consumption since September 2025. The pricing structure is straightforward: two hundred dollars per month for a tenant-pooled capacity pack of 25,000 credits, or one cent per credit on pay-as-you-go through an Azure subscription. For users already licensed for Microsoft 365 Copilot at thirty dollars per user per month, agent operations consumed inside Microsoft 365 surfaces (Teams, SharePoint, Outlook) are zero-rated. External-channel deployment, autonomous trigger agents, and reasoning-model orchestration draw from chargeable capacity.
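
A quick worked comparison of the two purchase options, reading the pay-as-you-go rate as one cent per credit. A full pack works out to 0.8 cents per credit (200 dollars divided by 25,000), so the pack costs the same as pay-as-you-go at 20,000 credits a month. The sketch assumes packs can be stacked in whole units and ignores zero-rated usage. The function names are my own, and how many credits a given agent action consumes is deliberately left as an input, since that depends on the build.

```python
PACK_PRICE_CENTS = 20_000   # 200 dollars per capacity pack per month
PACK_CREDITS = 25_000       # credits included in one pack
PAYG_CENTS_PER_CREDIT = 1   # pay-as-you-go, read as one cent per credit


def pack_cost_cents(credits: int) -> int:
    """Monthly cost when buying whole capacity packs (assumed stackable)."""
    packs = -(-credits // PACK_CREDITS)  # ceiling division
    return packs * PACK_PRICE_CENTS


def payg_cost_cents(credits: int) -> int:
    """Monthly cost on pay-as-you-go."""
    return credits * PAYG_CENTS_PER_CREDIT


def cheaper_option(credits: int) -> str:
    """Name the cheaper purchase option for a monthly credit volume."""
    pack, payg = pack_cost_cents(credits), payg_cost_cents(credits)
    if pack < payg:
        return "capacity pack"
    if payg < pack:
        return "pay-as-you-go"
    return "equal"


for monthly_credits in (10_000, 20_000, 25_000):
    print(monthly_credits, cheaper_option(monthly_credits))
```

Below 20,000 credits a month, pay-as-you-go is the cheaper of the two under these assumptions. Just above a pack boundary it can win again, because the next whole pack has to be bought.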

Three platforms come up when leaders ask how this compares. Each has a different commercial structure, and direct unit-by-unit comparison is misleading. The honest comparison is at the use-case level.

Going direct to Anthropic via API, Claude Opus 4.7 sits at approximately five dollars per million input tokens and twenty-five dollars per million output tokens. Sonnet 4.6 is around three dollars per million input tokens and fifteen dollars per million output tokens. For an agent processing high volumes of moderately complex text, that token-level pricing typically produces a lower headline cost than Microsoft's per-message billing. The trade-off is that the organisation carries the integration work, the data flow review, the new vendor relationship, and the governance perimeter for a system sitting outside the existing Microsoft contract.
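
To see what those token rates mean for a day's work, the arithmetic can be sketched as below. The prices are the approximate per-million-token figures quoted above. The daily volume (300 emails, 2,000 input tokens and 500 output tokens each) is a made-up illustration rather than a measurement from any build, and the model labels are just dictionary keys.

```python
# Approximate USD prices per million tokens, as quoted in the text.
PRICE_PER_MILLION = {
    "opus-4.7": {"input": 5, "output": 25},
    "sonnet-4.6": {"input": 3, "output": 15},
}


def api_cost_usd(model: str, input_tokens: int, output_tokens: int) -> float:
    """Token cost in dollars for one batch of work on the given model."""
    price = PRICE_PER_MILLION[model]
    total = input_tokens * price["input"] + output_tokens * price["output"]
    return total / 1_000_000


# Hypothetical daily volume, for illustration only.
EMAILS_PER_DAY = 300
INPUT_TOKENS_PER_EMAIL = 2_000
OUTPUT_TOKENS_PER_EMAIL = 500

for model in PRICE_PER_MILLION:
    daily = api_cost_usd(
        model,
        EMAILS_PER_DAY * INPUT_TOKENS_PER_EMAIL,
        EMAILS_PER_DAY * OUTPUT_TOKENS_PER_EMAIL,
    )
    print(f"{model}: ${daily:.2f} per day")
```

Under those assumed volumes the token bill is a few dollars a day on either model, which is why the headline token price rarely decides the platform.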

OpenAI direct via API has a similar shape. GPT-5 pricing sits in a comparable range. Same trade-off. Same procurement work required.

Microsoft Copilot Studio, measured in isolation, has a higher per-message cost. Measured against the existing Microsoft 365 license, the existing tenancy, the existing Purview controls, the existing audit trail, and the existing IT governance work, the total cost profile is different. For most maritime organisations already operating inside Microsoft 365, the marginal cost of an internal agent built in Copilot Studio is a small fraction of what the same agent would cost to deploy through a direct API integration once the procurement, security review, and governance work are added.

For a typical maritime use case (an inbox triage agent processing several hundred technical emails per day, grounded in tenant data, with a human reviewing the output), the dominant cost is not the AI in any of the three platforms. It is the staff time the agent is replacing. Selecting the platform on the basis of headline token price is the wrong frame. Selecting the platform on the basis of where the work can get approved and deployed is the right one.

If you want to model your own usage, Microsoft has an official Copilot Studio Agent Usage Estimator. It is the tool I use when I am scoping a build, and it is more reliable than any number I could put in this article.

› Read more on Microsoft: Microsoft 365 Copilot pricing, Copilot Studio billing and capacity, Copilot Studio Agent Usage Estimator.

Where this leaves us

For maritime organisations operating with serious accountability and risk requirements, these changes matter more than for less regulated sectors. Registries, fleet managers, marina operators, technical managers all sit inside the same gravitational pull. The procurement gate has not lowered. The compliance and audit obligations have not eased. What has changed is that organisations now have an agent-building layer that operates within the perimeter they already maintain.

The governance work still needs to be done. The AI use policy still needs to be written. The data classifications still need to be defined. The decisions still need to be documented.

What has changed is that organisations no longer have to leave their existing technology environment to do the work.

I am currently building agents inside Microsoft Copilot Studio across several maritime use cases, including a technical inbox triage agent in a registry environment. The verdict on production performance will come in a few months, once builds have been live long enough to measure properly. What I can say now is that I have spent eighteen months telling maritime leaders the structural fit was not yet there. The structural fit is now there. That is a real change, and it is the reason this article exists.

If your organisation has been Microsoft-locked and AI-blocked as a consequence, and you would not be reading an article like this if some version of that was not the case, the conversation has now changed. Not next quarter. Now.

The gate that has been blocking adoption did not break. It moved.

Kristina Agustin is the Founder and Principal Digital Navigator of Southern Sky AI, a structured AI adoption practice for maritime leaders. She has 20 years of maritime operations experience, is an admitted Lawyer, and holds AWS Certified AI Practitioner and IWAI Certified AI Consultant credentials.

Further Reading

Microsoft Copilot platform

Microsoft 365 Copilot overview: learn.microsoft.com/copilot/microsoft-365/microsoft-365-copilot-overview

Microsoft Copilot Studio overview: learn.microsoft.com/microsoft-copilot-studio/fundamentals-what-is-copilot-studio

Build agents with Copilot Studio: learn.microsoft.com/microsoft-copilot-studio/fundamentals-get-started

Microsoft Foundry overview: learn.microsoft.com/azure/ai-foundry/what-is-azure-ai-foundry

Microsoft Copilot Cowork: microsoft.com/microsoft-365/copilot/cowork

What's new in Microsoft Copilot Studio: learn.microsoft.com/microsoft-copilot-studio/whats-new

Microsoft 365 Copilot blog (Tech Community): techcommunity.microsoft.com/blog/microsoft365copilotblog

Anthropic and Claude inside Microsoft

Claude now available in Microsoft Foundry and Microsoft 365 Copilot (Anthropic announcement): anthropic.com/news/claude-in-microsoft-foundry

Anthropic as a subprocessor for Microsoft Online Services: learn.microsoft.com/copilot/microsoft-365/connect-to-ai-subprocessor

Use Anthropic models in Microsoft 365 Copilot: learn.microsoft.com/copilot/microsoft-365/microsoft-365-copilot-anthropic

Governance, security, and compliance

Microsoft Service Trust Portal: servicetrust.microsoft.com

Microsoft Customer Copyright Commitment: learn.microsoft.com/legal/cognitive-services/openai/customer-copyright-commitment

Microsoft Agent 365: microsoft.com/microsoft-365/agent-365

Microsoft Entra Agent ID: learn.microsoft.com/entra/identity/agent-id/agent-id-overview

Microsoft Purview for AI: learn.microsoft.com/purview/ai-microsoft-purview

Agent extensibility

Model Context Protocol in Copilot Studio: learn.microsoft.com/microsoft-copilot-studio/agent-extend-action-mcp

Power Platform connector reference: learn.microsoft.com/connectors/connector-reference

Pricing and licensing

Microsoft 365 Copilot pricing: microsoft.com/microsoft-365/copilot/pricing

Copilot Studio billing and capacity: learn.microsoft.com/microsoft-copilot-studio/requirements-licensing

Copilot Studio Agent Usage Estimator: learn.microsoft.com/microsoft-copilot-studio/agent-usage-estimator