In February 2026, Wall Street wiped roughly $285 billion from the valuations of SaaS companies in a single 48-hour window (Jefferies, 2026). Major SaaS businesses took share price hits in the order of 20 percent. The commentariat named the moment the SaaSpocalypse. The trigger was a growing belief among investors that artificial intelligence had quietly altered something fundamental about the software industry: if anyone can now build custom software through natural language prompts, the assumption that every business needs to pay for a rigid, off-the-shelf SaaS product at $50 to $200 per seat per month no longer holds in the same way.

The term driving the conversation is vibe coding. It was coined by Andrej Karpathy, a co-founder of OpenAI, who described the practice of building software by describing what you want in plain language and letting the model generate the code. The phrase was originally light-hearted. Eighteen months later, vibe coding sits at the centre of a $4.7 billion market, an estimated 41 percent of all code is now AI-generated, and 63 percent of vibe coding users have no formal development background (Taskade, 2026).

The cost of building software has fallen sharply, which is what the SaaSpocalypse and the rise of vibe coding are really signalling. The standards for governing software have to rise to meet that shift, both at the vendor level and inside organisations using these tools without realising what they are building.

What Vibe Coding Is

Vibe coding is the practice of building software by describing the outcome in natural language to an AI model, which then writes the underlying code. Platforms like Lovable, Bolt, Cursor, and Replit have made this accessible to people with no formal development background. A non-technical founder can now describe a customer portal in plain English and have a working prototype in front of them within an hour.

The capability shift is real. It is not a marketing claim.

I work inside this layer daily. I hold Diamond tier status with Lovable, which is the highest practitioner credential the platform issues. The website you are reading this article on was built in Lovable and is backed by Cloudflare. The Compass AI Navigator portal we use with active clients was built in Lovable, backed by a Supabase database, and deployed through Cloudflare. I spent the most recent Mother's Day weekend competing in a Lovable hackathon with a defining constraint: build an end-to-end working application without typing a single line of input, pairing the platform with Wispr Flow voice transcription for every prompt.

Vibe coding collapses the distance between an idea and a working prototype. A single operator can test concepts, iterate on positioning, and ship interfaces that would previously have required a development team and a four-month timeline, or much longer. Lovable has recently added native design refinement and Answer Engine Optimisation tooling, which means the architectural decisions I previously had to direct the platform into making, like generating fully crawlable static HTML for search engines and large language models, are now exposed in the platform itself.

The capability has limits. A prototype built by someone working alone, with no review, no security consideration, no understanding of what dependencies the model has pulled in, and no plan for what happens when something breaks, is not a production system. The same tool, used by someone who knows what to interrogate, paired with proper review discipline, security scanning, and a structured deployment path, can produce assets that organisations rely on. The discipline around the tool decides whether the output is fit for production.

Why AI-Generated Code Needs a Human in the Loop

The intuition that AI removes human error is reasonable, and it is also wrong in a specific way.

Large language models generate code by predicting the most likely next token based on patterns they have seen across enormous bodies of public code. They are excellent at producing code that looks correct. They are less good at producing code that is correct for a specific context the model has not been given enough information about. The model does not know your environment, your data, your security requirements, your existing system, or what the code is supposed to do downstream from where the prompt ends.

In December 2025, the code review platform CodeRabbit released a study analysing 470 real-world pull requests across open source projects (CodeRabbit, 2025). AI-generated code contained approximately 1.7 times more issues on average than human-written code. Logic and correctness errors rose by 75 percent. Security vulnerabilities were between 1.5 and 2 times more common, with cross-site scripting vulnerabilities appearing 2.74 times more often. Improper password handling was 1.88 times more likely. Insecure object references were 1.91 times more likely.

The findings reflect the gap. When AI writes code without a person who understands the context reviewing it, the model will produce:

- Functions that work in isolation but make incorrect assumptions about what the rest of the system is doing

- Security patterns that are common in training data but inappropriate for the specific application

- Code that pulls in dependencies the model has seen before but that are not the right fit for the project

- Logic that handles the most common case correctly but fails on edge cases the prompt did not describe

A human developer with experience would catch these. An AI without a human in the loop generally will not.
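
The last pattern in that list is the easiest to show in miniature. The sketch below is a hypothetical illustration, not an example drawn from the CodeRabbit study: the function names and the charter-rate scenario are assumptions made for this article. The first function is the kind of code that looks correct and passes a quick demo. The second shows what a reviewer who asked "what happens with no bookings?" might change.

```python
def average_nightly_rate_unreviewed(rates):
    # Correct for the common case of a non-empty list, but raises
    # ZeroDivisionError when rates is empty (for example, a new vessel
    # with no bookings yet).
    return sum(rates) / len(rates)


def average_nightly_rate_reviewed(rates):
    # The empty case is now an explicit decision (return None) rather
    # than an unhandled crash the prompt never described.
    if not rates:
        return None
    return sum(rates) / len(rates)
```

Whether the empty case should return None, zero, or raise a clearer error is a business decision. The point is that someone with context has to make it.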

The study is not saying AI-generated code is bad. It is saying AI-generated code without review is measurably more defect-prone than human-written code with review. That distinction is the entire governance point.

What Happens Without Review

In late January 2026, an AI agent social network called Moltbook launched after its founder publicly stated he "didn't write one line of code." Three days later, security firm Wiz discovered that a misconfigured Supabase database had exposed 1.5 million API authentication tokens, 35,000 email addresses, and several thousand private messages (Wiz Research, 2026). The breach echoes the wider pattern explored in the AI security landmark we covered earlier this year.

The breach happened for a specific reason. The model generated a Supabase backend with the public API key embedded in client-side JavaScript, which is a normal and expected configuration. What made it catastrophic was that Row Level Security on the database was disabled. With Row Level Security properly configured, the public key is harmless. Without it, that single key granted full database access to anyone who inspected the page source.

The code was not the failure. The configuration was the failure. Nobody with security awareness reviewed what the model had built before it went live. That is a governance gap, not a tool problem.
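
A review step for this class of failure can be small. The sketch below assumes a Postgres-backed project such as Supabase and relies on the standard `pg_tables` catalog view, whose `rowsecurity` column records whether Row Level Security is enabled on each table. The function name `tables_without_rls` and the sample rows are hypothetical, and the database connection needed to run the query is deliberately left out.

```python
# Query against Postgres's built-in pg_tables view. Running it requires a
# database connection, which this sketch does not include.
RLS_AUDIT_SQL = """
SELECT tablename, rowsecurity
FROM pg_tables
WHERE schemaname = 'public';
"""


def tables_without_rls(rows):
    """Return, sorted, the names of tables whose RLS flag is off.

    rows: iterable of (tablename, rowsecurity) tuples, the shape the
    query above returns.
    """
    return sorted(name for name, rls_enabled in rows if not rls_enabled)


# Hypothetical result set, for illustration only.
sample_rows = [("messages", False), ("profiles", True), ("api_tokens", False)]
print(tables_without_rls(sample_rows))  # prints ['api_tokens', 'messages']
```

An empty list is necessary, not sufficient. A table with Row Level Security enabled can still carry an over-permissive policy, so the policies themselves still need a human read before launch.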

Where the SaaSpocalypse Hits Hardest

The SaaSpocalypse headline collapses an important distinction. SaaS is a broad category, and the impact of vibe coding sits unevenly across it.

Pure single-process SaaS products, the kind where a user pays a per-seat subscription for one focused capability that a vibe coding tool could plausibly reproduce in a weekend, are the most exposed. Calendly for scheduling. Typeform for forms. Loom for video recording. Linktree for link aggregation. Buffer for social media scheduling. The common pattern is a single workflow, a per-seat subscription, no significant integration depth, and no domain expertise built into the product. The entire value proposition lives inside the software itself.

SaaS businesses that pair their platform with a meaningful professional services component sit in a more resilient position. The platform is part of the offer, not the whole of it. The relationships, the implementation expertise, the configuration consulting, the people doing the work that no model can yet do in context, are what compound. These businesses are not immune to pressure. They are structurally better positioned to absorb it.

Enterprise software with deep regulatory, integration, or domain complexity sits in the most defensible position. Maritime examples make this concrete. Global logistics platforms with thousands of country-specific regulatory integrations. Classification society reporting systems from DNV, Lloyd's Register, and Bureau Veritas. Fleet management software that integrates with sensor data and regulatory frameworks. None of these are products a non-developer can replicate in an afternoon. The moat is the complexity itself.

The SaaS conversation is becoming a more discerning conversation.

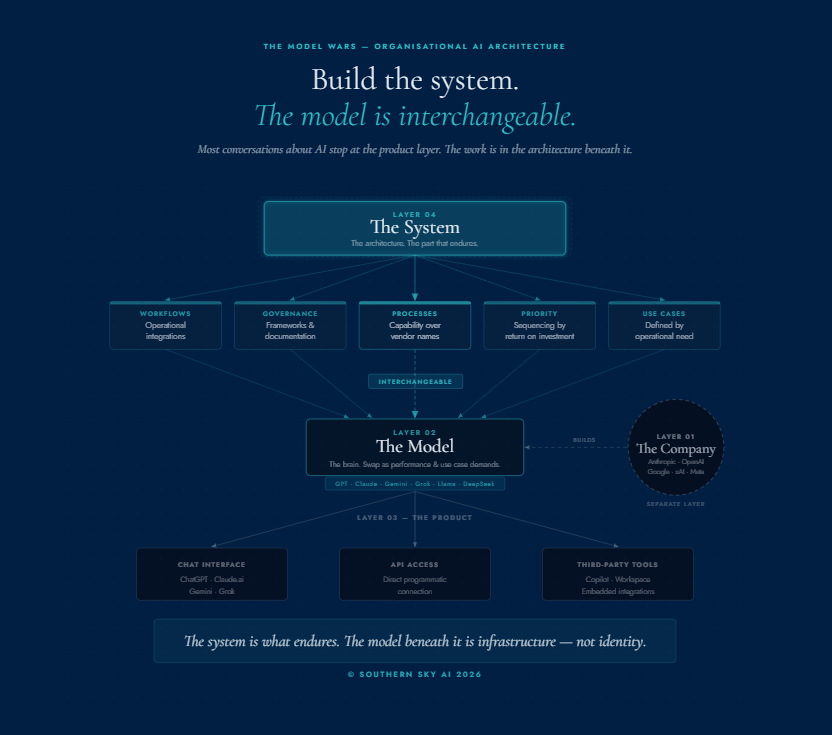

What This Means One Layer Up

A version of the same dynamic is now playing out inside organisations, at a smaller scale and quieter pace than the public market story. Individual employees are using AI to build tools their leadership teams have not yet seen, mapped, or governed.

Sometimes they build a quick spreadsheet automation. Sometimes they spin up a tracking app over a weekend. Sometimes they connect a model to a workflow and quietly start running production decisions through it. The capability is welcome. The governance position around it is the live question.

A maritime example sharpens the point. A yacht manager working shoreside could, today, build a charter enquiry tracking application using Lovable and a free Supabase database. The application would work. It would store guest data, charter terms, financial figures, and operational notes. It would also sit outside the organisation's IT environment, its data residency obligations, its incident response procedures, and any policy framework the management company has in place. The person who built it is not behaving recklessly. They are solving an operational problem with the tools now available to them. The same application, built with the same tools but inside a sanctioned process, with credential management handled centrally, data flowing into the organisation's approved environment, and security review applied before launch, becomes a sanctioned asset rather than a quiet exposure. The question is whether the organisation around the person has the policy framework that distinguishes between the two.

The same dynamic appears across our industry. Crew members onboard drafting compliance documents in ChatGPT. Shoreside teams running guest itineraries through translation models. Brokers and other professional services firms using AI to summarise charter agreements. Each instance is a productivity gain. The aggregate is an organisational AI footprint that almost no maritime business has formally mapped.

Where the Line Sits

The line sits between what a capable person can build and what an organisation should depend on.

A capable person with a vibe coding tool can build a prototype. With the right discipline, they can build a production system. Without it, they can build a liability that looks polished enough to be promoted into operations before anyone notices. The speed of creation has changed. The standards for fitness, security, integration, and accountability have not. The organisations that adopt vibe coding well will be the ones that recognise both the capability and the discipline as part of the same conversation.

The organisations that read the SaaSpocalypse correctly will not pivot toward replacing their core systems with weekend builds. They will look at the underlying signal. The cost of building software has dropped, which means the volume of software being built inside their organisation is rising, often without their knowledge. The governance task is to map that quietly emerging footprint and bring it under structured oversight before it becomes a liability.

Where to Begin

The work begins before the tools. An organisation cannot govern what it has not named. The foundation is a structured AI adoption position, documented, owned at the executive level, and supported by a sequenced roadmap. For individual leaders, that foundation often starts with building personal AI literacy through the Academy or a done-with-you working environment for executives. Everything else builds from there.

That position needs to answer specific questions. Which tools are sanctioned and which are not. What data may be put into a generative model and what must never be. Who reviews software built internally before it touches production. What the policy is on prototypes promoted to live use. How a vibe-coded internal tool is treated differently from a prototype demonstrated in a meeting. Where the boundary sits between an individual using AI well and an organisation depending on something that needs to be properly governed.
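
Written down as a structured record, those answers become something a review step can check against. The sketch below is one hypothetical shape for such a record. Every class, field, tool name, and data category in it is an assumption for illustration, not a recommended policy.

```python
from dataclasses import dataclass, field


@dataclass
class AIUsePolicy:
    # All values are placeholders an organisation would set for itself.
    sanctioned_tools: set = field(default_factory=set)
    restricted_data: set = field(default_factory=set)
    review_required_before_production: bool = True


@dataclass
class InternalTool:
    name: str
    built_with: str
    data_categories: set
    reviewed: bool = False


def policy_gaps(tool, policy):
    """List the reasons a tool is not yet fit to be promoted to live use."""
    gaps = []
    if tool.built_with not in policy.sanctioned_tools:
        gaps.append(f"{tool.built_with} is not a sanctioned tool")
    for category in sorted(tool.data_categories & policy.restricted_data):
        gaps.append(f"handles restricted data: {category}")
    if policy.review_required_before_production and not tool.reviewed:
        gaps.append("no review recorded")
    return gaps


# Hypothetical example: a sanctioned tool, permitted data, but no review yet.
policy = AIUsePolicy(
    sanctioned_tools={"Lovable"},
    restricted_data={"guest passport scans"},
)
tracker = InternalTool(
    name="charter enquiry tracker",
    built_with="Lovable",
    data_categories={"guest contact details"},
)
print(policy_gaps(tracker, policy))  # prints ['no review recorded']
```

A record like this does not replace the policy conversation. It only makes the outcome of that conversation checkable each time a prototype is proposed for live use.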

These questions do not require an organisation to slow down. They require it to be clear-eyed about what is already happening and to build the documented foundation that lets the productivity gains compound without compounding the risk alongside them.

The cost of building software has fallen sharply. The standards for governing it have to rise to meet it. Organisations that build that structure now, and the software vendors who serve them, will set the standard for those who follow.

Our industry is built on exceptionalism. AI adoption demands the same.

Kristina Agustin is the Founder and Principal Digital Navigator of Southern Sky AI, a governance-led AI adoption advisory practice serving maritime leaders.

If you would like to map your organisation's emerging AI footprint and bring it under structured oversight, the Compass AI Blueprint is where we start. Learn more about the Blueprint.

Further Reading

CodeRabbit. (2025). State of AI vs Human Code Generation Report. coderabbit.ai/whitepapers/state-of-AI-vs-human-code-generation-report

Wiz Research. (2026). Hacking Moltbook: AI Social Network Reveals 1.5M API Keys. wiz.io/blog/exposed-moltbook-database-reveals-millions-of-api-keys

Jefferies Equity Research. (2026). SaaSpocalypse market commentary, February 2026.

National Cyber Security Centre. (2026). Vibe check: AI may replace SaaS (but not for a while). ncsc.gov.uk

The SaaS CFO. (2026). The SaaSpocalypse: AI Agents, Vibe Coding, and the Changing Economics of SaaS.

Taskade. (2026). State of Vibe Coding 2026 — Market Data and Adoption Metrics. taskade.com/blog/state-of-vibe-coding-2026